Every once in a while people ask me what’s taking me so long to open my startup, Inkzee, to the public. They also ask what exactly I have been doing, as the site looks exactly the same. I normally answer that things aren’t easy and that it takes time, especially if you are alone, like I am. After a while I end up explaining my problems with scalability, and that’s the point where people just can’t follow me. I’m going to explain here what scalability problems are and how deep their repercussions are for a small company.

Most web applications, like Inkzee, Facebook, Twitter, … are made of 2 parts, what we, the tech nerds, call the frontend and the backend. The frontend is the part of the application that’s exposed to the users, that is, the user interface (UI), the emails, the information that is shown. All that UI is a mix of different languages, be it PHP, JavaScript, HTML, etc. The frontend is in charge of drawing the UI on the user’s screen and displaying all the information the user expects from the application. But this information has to come from somewhere. Well, that’s the backend.

The backend is all the programs and software applications that run behind the scenes and that are in charge of generating, maintaining and delivering the information the frontend displays to the user. The backend can be very homogeneous or very heterogeneous, but it’s normally made up of 2 parts: the database (where the information and data are stored) and the software that deals with that database, does the data crunching and connects it all to the frontend.

Now, some web applications have a barebones backend, very simple and lightweight: normally some software that takes what the user enters in the interface and stores it in the database and, vice versa, retrieves it from the database and shows it to the user. Other web applications have an extremely complex backend (e.g. Twitter, Facebook, …). These not only manage the data retrieval, but also have to do really complex operations with the data. Not only complex, but very expensive in terms of computational power. For example, each time a user uploads a picture to Facebook, it follows this path:

- The picture is stored in a specific hard drive. The backend has to determine which hard drive corresponds to that user (yes, there are multiple hard drives and each one is assigned to a bunch of users so the load is distributed).

- Once stored, the picture is sent to a processing queue where it will be turned into a thumbnail by image processing software. This process is expensive, as it has to analyze the picture and reduce it to a smaller representation of the image while still maintaining part of its quality.

- After processing it, the backend stores the newly created thumbnail in the database and stores both the picture and the thumbnail in an intermediate “database” in memory for faster access (a cache). This is because it’s faster to retrieve data from memory than from a hard drive.
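To make the path above a bit more concrete, here is a minimal sketch in Python. It is in no way how Facebook actually does it: the number of drives, the in-memory dictionaries standing in for drives, database and cache, and every function name (`drive_for_user`, `make_thumbnail`, `upload`, `process_queue`) are assumptions made up for illustration. The "thumbnail" is just a grid of grayscale values averaged in 2x2 blocks.

```python
import hashlib
from collections import deque

NUM_DRIVES = 4  # assumption: a tiny cluster of four storage drives

drives = [dict() for _ in range(NUM_DRIVES)]  # stand-ins for the hard drives
database = {}                                 # stand-in for the thumbnail database
cache = {}                                    # stand-in for the in-memory cache
thumbnail_queue = deque()                     # the processing queue


def drive_for_user(user_id: str) -> int:
    """Pick a drive with a stable hash so each user always maps to the same one."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % NUM_DRIVES


def make_thumbnail(pixels, factor=2):
    """Shrink a 2D grid of grayscale values by averaging factor x factor blocks."""
    thumb = []
    for r in range(0, len(pixels) - factor + 1, factor):
        row = []
        for c in range(0, len(pixels[0]) - factor + 1, factor):
            block = [pixels[r + dr][c + dc]
                     for dr in range(factor) for dc in range(factor)]
            row.append(sum(block) // len(block))
        thumb.append(row)
    return thumb


def upload(user_id, picture_id, pixels):
    """Step 1: store the picture on the user's drive. Step 2: queue it."""
    drives[drive_for_user(user_id)][picture_id] = pixels
    thumbnail_queue.append((user_id, picture_id))


def process_queue():
    """Step 3: build thumbnails, save them, and warm the cache."""
    while thumbnail_queue:
        user_id, picture_id = thumbnail_queue.popleft()
        pixels = drives[drive_for_user(user_id)][picture_id]
        thumb = make_thumbnail(pixels)
        database[picture_id] = thumb
        cache[picture_id] = {"picture": pixels, "thumbnail": thumb}
```

Calling `upload("alice", "pic-1", pixels)` followed by `process_queue()` leaves the thumbnail in `database["pic-1"]` and both versions in `cache["pic-1"]`. Even in this toy version the expensive part is clearly `make_thumbnail`, which touches every pixel of every picture.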

This is an approximation of what happens to a picture when you upload it to a social network. I’m pretty sure it goes through a lot more processes though. So, supposing 1% of a social network’s users are uploading pics at any single moment, imagine ~20 photos per user from 2.5 million users at the same time (Facebook currently has around 250 million users). Trust me when I tell you, that’s a lot of data crunching.
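The back-of-the-envelope math can be written down in a few lines of Python. The user count, the 1% share and the ~20 photos come from the paragraph above. The three operations per photo (store, thumbnail, cache) are an assumption taken from the simplified path, so the real number is surely higher.

```python
TOTAL_USERS = 250_000_000    # Facebook's approximate user count
UPLOADING_SHARE_PERCENT = 1  # share of users uploading at any single moment
PHOTOS_PER_USER = 20         # photos uploaded by each of those users
STEPS_PER_PHOTO = 3          # assumption: store, thumbnail, cache

uploading_users = TOTAL_USERS * UPLOADING_SHARE_PERCENT // 100
photos_in_flight = uploading_users * PHOTOS_PER_USER
backend_operations = photos_in_flight * STEPS_PER_PHOTO

print(f"{uploading_users:,} users uploading")        # 2,500,000
print(f"{photos_in_flight:,} photos to handle")      # 50,000,000
print(f"{backend_operations:,} backend operations")  # 150,000,000
```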

The problem

The best user interfaces (frontend) are designed so that all the complexity that goes on behind the scenes is never shown to the end user. The problem is that the frontend depends heavily on the backend. If the backend is slow, the frontend won’t have the info the user is requesting or expecting, and it will seem SLOW to the end user. Not only slow, but in many cases inefficient or just not available at all (meet the Twitter Fail Whale :P).

So, now, what will cause the backend to be slow? Ohhhhhh don’t get me started!! There are so many reasons why the backend might be slow or broken! But most of them are triggered by growth. That is, as the web application is used by more and more users, the backend will start to fall apart. That’s what is known in the tech world as scalability problems: the backend can’t scale at the same speed the users pour into the application. And it’s not only a matter of more users, but also of users who interact more heavily with the site. For example, you might have 100,000 active users and never have experienced big scalability problems. Suddenly you release a feature that allows your users to share pictures more easily… BAM!! Your backend goes down in 10 minutes. Why!! Why?!! you might scream while you watch your servers go down in flames. After all, you have the same number of users, so what happened? Well, most probably your backend system that handles picture sharing was designed and tested with only a few users. Now it chokes under the real load.

The REAL problem

Once you have scalability problems, the next logical step is to find where the bottleneck is and why it is happening. This might seem very easy, but it isn’t at all. It’s like looking for a needle in a haystack. Big backends are normally VERY complex, with many parts coded in different programming languages by different people. Not only that, but sometimes problems arise in several parts of the backend at once. So after a couple of really stressful hours you find the bottlenecks and think of a solution to fix them. Ahh my friend, then you realize it’s not as easy to fix as you thought. First of all, you have no clue if the fixes your team has come up with are good enough. Why? Because you’re stepping into unexplored territory. Few people have had to tackle a similar problem, and even fewer have dealt with your data and systems. So even if you find someone else with the same problem, the solution might be slightly different depending on what systems you use for your backend or which architecture you have. This is the point where you realize that developers aren’t engineers but craftsmen, and that fixing these problems isn’t exactly a science but black voodoo magic.


So, here you are, with a bunch of possible fixes to a problem but with no clue if they will really work or if they will just be a patch that needs extra fixes in 2 weeks. Normally you try to benchmark the solutions, but that’s not an easy task, especially because you have no real load to test against except on your production servers and no, you don’t want to fuck up the production servers more than they already are.
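One cheap way to get something closer to real load, assuming you have a log of recorded requests to replay, is to run the same sample through the old code and the candidate fix and compare latencies. The sketch below is only that, a sketch: `replay`, `compare` and the handlers you pass in are hypothetical names, and a replay on a dev box will never behave exactly like production.

```python
import math
import time


def percentile(samples, p):
    """Return the p-th percentile (0-100) using the nearest-rank method."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(len(ordered) * p / 100))
    return ordered[rank - 1]


def replay(handler, recorded_requests):
    """Run every recorded request through handler and time each call."""
    latencies = []
    for request in recorded_requests:
        start = time.perf_counter()
        handler(request)
        latencies.append(time.perf_counter() - start)
    return latencies


def compare(old_handler, new_handler, recorded_requests):
    """Replay the same requests against both handlers and summarize latency."""
    report = {}
    for name, handler in (("old", old_handler), ("new", new_handler)):
        latencies = replay(handler, recorded_requests)
        report[name] = {
            "count": len(latencies),
            "p50": percentile(latencies, 50),
            "p95": percentile(latencies, 95),
        }
    return report
```

Looking at the 95th percentile rather than the average matters here, because the requests that take down a backend are usually the slow tail, not the typical ones.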

Finally, after some black magic and some simple tests, you cross your fingers and try the fix on the production servers. After several hours of monitoring the backend for new “leaks”, you scream with happiness as the patch seems to work. Then you start to realize that the patch won’t hold forever and that you need a more radical solution to the problem.

You sit down with your tech team (or on your own, as is my case 😦 ) and you start drafting a new solution. Suddenly you realize that the best fix implies changing the way your backend works. And by change I mean you need to redevelop a big chunk of your backend to fix the problem. This implies several things: you’ll need to invest a lot of time and resources, you’ll lose the stability your backend had (prior to the incident), you’ll walk into new unexplored territory for your team and, worst of all, you can’t just unplug your production servers and change the backend. You need to do it so that both backends coexist for a while, until you have switched all of your servers from the old one to the new one.
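One common way to let both backends coexist is to route a growing percentage of users to the new one. Here is a minimal sketch, assuming each user has a stable ID. The function names (`bucket`, `route`, `old_backend`, `new_backend`) and the percentage knob are made up for illustration and say nothing about how any particular company does it.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket in 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def old_backend(user_id, request):
    # Placeholder for the existing, battle-tested code path
    return f"old:{request}"


def new_backend(user_id, request):
    # Placeholder for the redesigned code path
    return f"new:{request}"


def route(user_id, request, rollout_percent):
    """Send users whose bucket is below rollout_percent to the new backend."""
    if bucket(user_id) < rollout_percent:
        return new_backend(user_id, request)
    return old_backend(user_id, request)
```

Because the bucket depends only on the user ID, a user who has been moved to the new backend stays there as `rollout_percent` goes up, and setting it back to 0 sends everybody to the old backend again if the new one starts to burn.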

Now, the REAL problem is that this change, this new redesign, grinds the whole company to a halt. All resources, be it people or money, are invested in the redesign effort, so nothing new can be done. Most outsiders just don’t understand the depth of this change and will bash the company for not doing new things, for not releasing new features, for not fixing old bugs, etc. Not only that, investors will start to get anxious and will demand that things start moving. So the outside world only sees that you’ve stalled, while the teams inside are suffering the pressure. On top of that, developers inside the company will get extremely frustrated by the pace of things. They won’t be able to add new features, and even when fixing bugs they’ll need to fix them twice: once in the old backend and once in the new backend.


So, in the end, you realize the shit hit the fan and you got all of it. It’s hard, very hard to be there. If you haven’t experienced it, you have no idea how hard it is. Not only as a developer, but also as a founder, CEO or executive, you’ll feel the pain. You won’t be able to publicize your site, because more load might accelerate the old backend’s problems. You can’t give users new features, because you have no resources. You will try to explain the problem to investors, but they won’t understand a word of what you’re talking about… “backend what?”. Current customers will be pissed at you because the site is running slow and, as far as they can see, you are doing nothing to fix it. So, in the end, everything freezes until the new backend is in place.

How long does this take? It depends. It depends on the size of the redesign, the size of the tech team, the skills of the team and, especially, the skills of the management. During this phase, management must execute impeccably. Sadly, this is not the case in most places, and so priorities are changed, mistakes are made and the redesign gets delayed over and over again.

It takes very good leadership to make it through this period: someone who knows where their priorities lie and who is able to foresee the future and the importance of the task ahead. Needless to say, such a figure is lacking in most companies. That’s the reason it took so long for Twitter to get its act together, for Facebook to speed up, etc.

I am there, I am suffering the redesign phase (twice now). It’s hard, it’s lonely, it’s discouraging and frustrating, but it needs to be done. I just wrote this post so that outsiders can get a glimpse of what it is like to be there and how it affects the whole company, not just the tech department. Scalability problems aren’t something you can dismiss as being ONLY technical: their roots might be technical, but their effects will shake the whole company.

Let there be light 🙂

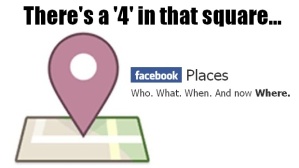
